Light differentiable logic gate networks

Abstract

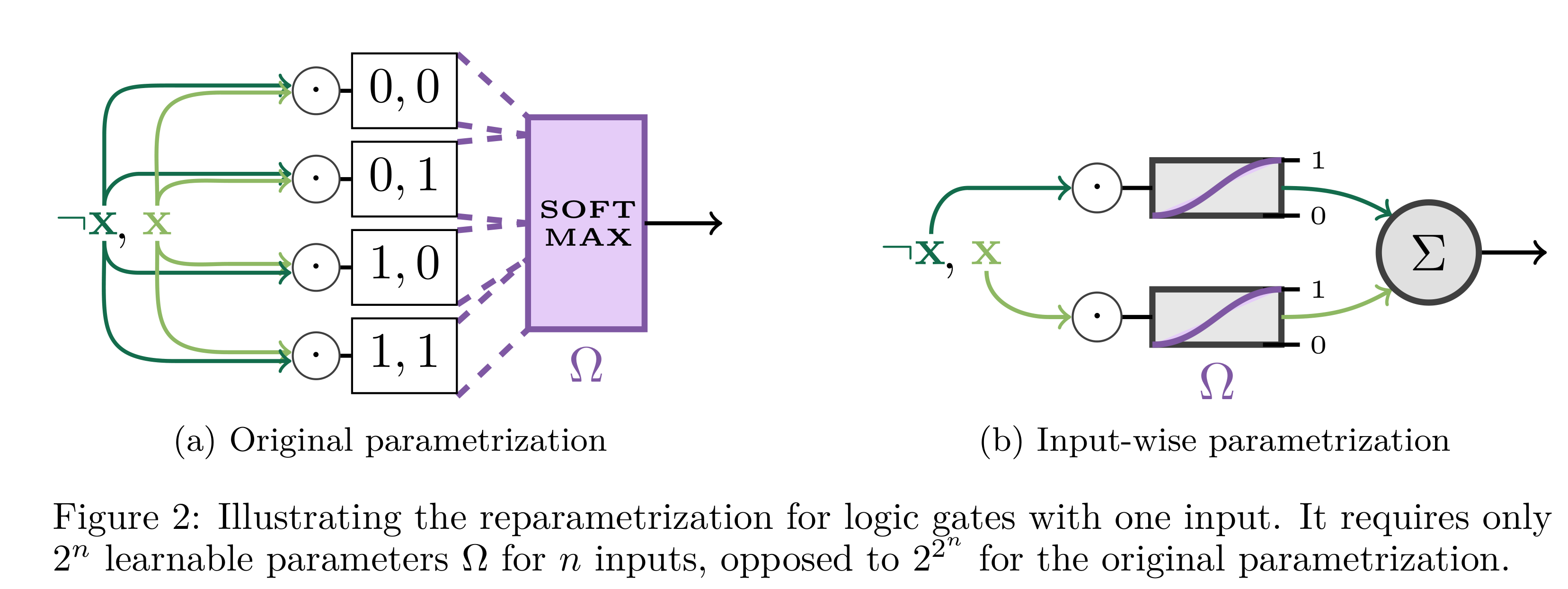

Differentiable logic gate networks (DLGNs) exhibit extraordinary efficiency at inference while sustaining competitive accuracy. However, vanishing gradients, discretization errors, and high training cost impede scaling these networks. With our reparametrization, we tackle these shortcomings and make DLGNs more scalable in depth and complexity. The implementation of our reparametrization is available on GitHub.

Presentation

To navigate through the slides yourself, open the presentation here.